AI software can now complete basically any task, except fully understand what we want it to do! Using software today requires that you learn how its engineer thinks, which is as unnatural as it sounds.

Through its relatively short history, software has already been liberated twice: first into programs that could be reused, and then into interfaces that non-programmers could use. We now face the third great liberation: software that can be created directly through intent alone. How will we achieve it?

The Program

When Alan Turing worked on cracking the Enigma code in WWII, computers were single devices for single computations. Other early computers were programmed by flipping switches and running cables. It could take days to change what the computer did.

Programs were so tightly bound to the hardware that there was no meaningful distinction between the two.

At this point in history, it’s hard to say there was software at all. There weren’t software engineers, because running a computation meant modifying the hardware. If you were working with a computer, you were a computer engineer.

There was no single inventor of the software program; it came about gradually. The Harvard Mark I read its instructions from punched paper tape, and ENIAC introduced early function reuse. The idea of the program solidified with IBM’s punch card systems in the 1950s. To work with a punch card computer, the operator loaded the program deck, then loaded your data deck. The computer performed the job and punched the result onto output cards.

This was software reuse. For the first time, the person who authored a program was not the same as the person who used it. The first software engineers! However, users were also trained engineers, given how difficult it was to create the data deck. Can you imagine spending weeks poring over the code and necessary input parameters before clicking on today’s software?

The interface changed this.

The Interface

As long as using a computer required advanced training, computers were useful to only a tiny fraction of people. Better interfaces steadily expanded who could use them.

In the 1960s, we had the Command-Line Interface (CLI). Xerox famously invented much of the Graphical User Interface (GUI), and Apple popularized it in the 1980s. The GUI improved over time, especially with the touch-first interface of the iPhone. Now toddlers happily navigate iPad software—for good or ill.

Toddlers are not, however, writing whatever new software they’d like to have; they can only use what already exists. Just like the rest of us! Even software engineers primarily use other people’s software for their work. Is this about to change?

The Intent

When you can only use software written by someone else, it’s never going to be exactly what you want. We are all stuck making do or doing without.

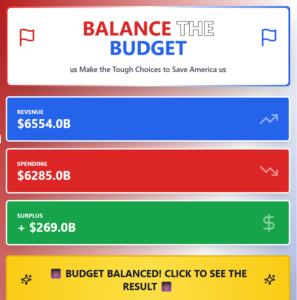

But we’re shockingly close to being able to change that. Claude Artifacts, for example, let you describe what you want to build. I made a game that way to show my teenager why balancing the U.S. federal budget is difficult.

We now call this “vibe coding,” creating software exclusively through conversation. But to vibe code beyond simple web apps, you tend to need to know a lot about software architecture.

We aren’t there yet — we still can’t convert what we imagine into action without training or endless trial-and-error. Even if you have training, I can nearly guarantee you’re not using AI ambitiously enough.

Liberating software from requiring software developers

I’ve written before about GDPval and its highly detailed definitions of expert tasks. AI can be incredibly valuable when we fully specify the work we have in mind, without any ambiguity. Once we do so, the AI will complete it (GPT-5.4 now beats or ties human experts 83% of the time on GDPval’s expert tasks). If we could write that kind of specification ourselves, we would author the “software” just when we need it. Unfortunately, I don’t think we’ll ever be able to write in enough detail on our own.

I have some ideas that may help us out.

Asking questions

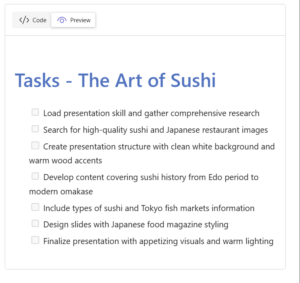

Communicating with anybody is hard. Without meaning to, we assume the person we’re talking to understands much more than we actually verbalize. It’s worse with chat interfaces, because we don’t have tone and body language to help communicate our intent. Perhaps it would be better to be interviewed by the AI?

Deep research in ChatGPT is the first product I remember that would ask you a few questions after you submitted a prompt. Well-crafted questions remove ambiguity in the definition of the work. Poor questions, meanwhile, are annoying and not helpful at all.

Surely AI can act as an expert interviewer, just as it can perform any other knowledge task. Is asking questions like Barbara Walters the right way to understand the user’s intent?

Showing the detail

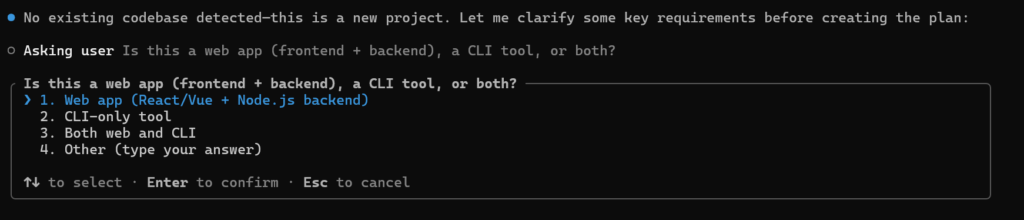

Plan mode in coding agents is great. You chat and answer questions while the model explores the code base. When it has enough information, it shows you the plan. Claude Code tends to write plans that are several pages long.

If you read the plan and agree to it, it’s like you authored one of those multi-page prompts in GDPval. But actually reading the plan is tedious, and plans tend to be very dense and technical. I suspect that few users read the entire thing; some coding agents don’t even show it all by default. Even fewer users are going to edit it.

Is there a way to show the detail more visually? And if so, would it help?

Watching and changing

There’s little more disappointing than having AI work for an hour, then looking at the result and realizing it is useless.

The latest agentic products now let you send additional messages while they work. What you add changes what the AI builds. So if it is on the wrong track or misunderstood your intent, you can, in theory, correct it before it finishes.

Unfortunately, mostly automated systems make people complacent. Who wants to watch an AI work for an hour? One way this could be addressed is simply making the AI dramatically faster. If it creates the entire output in 3 seconds, it’s now trivial to review it and ask for updates. AI is indeed getting faster, but we aren’t there yet. More viable in my mind is notifying the user to check in and review as major steps of the work complete.
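To make the check-in idea concrete, here is a minimal sketch in Python. Everything in it is hypothetical and for illustration only: `Step`, `run_with_checkins`, and the two stand-in callbacks are names I made up, not the API of any real agent product. The point is just the shape of the loop: do the work, pause after each major step, and fold any correction into what comes next.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Step:
    name: str
    major: bool  # True if the user should be asked to review after this step


def run_with_checkins(
    steps: List[Step],
    do_step: Callable[[Step, List[str]], str],
    ask_user: Callable[[Step, str], Optional[str]],
) -> List[str]:
    """Run steps in order, pausing for review after each major step.

    do_step performs the work, given all guidance collected so far.
    ask_user returns a correction string, or None to continue unchanged.
    """
    guidance: List[str] = []
    log: List[str] = []
    for step in steps:
        result = do_step(step, guidance)
        log.append(result)
        if step.major:
            feedback = ask_user(step, result)
            if feedback:
                # Later steps see the correction; earlier work is not redone.
                guidance.append(feedback)
    return log


# Stand-ins for a real agent and a real notification, purely for the demo.
def fake_do_step(step: Step, guidance: List[str]) -> str:
    note = f" (applying: {guidance[-1]})" if guidance else ""
    return f"{step.name} done{note}"


def fake_ask_user(step: Step, result: str) -> Optional[str]:
    return "use metric units" if step.name == "draft outline" else None


steps = [
    Step("gather sources", major=False),
    Step("draft outline", major=True),
    Step("write report", major=True),
]
log = run_with_checkins(steps, fake_do_step, fake_ask_user)
for line in log:
    print(line)
```

In this toy run, the user is only interrupted twice, and the correction given after the outline shapes the report that follows. Deciding which steps count as "major" is the hard part, and the sketch simply assumes someone has already labeled them.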

Emancipating the intent

I don’t know if one of my three hypotheses will solve this dilemma. What I do know is that solving this last challenge will dramatically change the world of software.

Programs created the profession of software engineering, and interfaces let everyone else use what those engineers built. Once anyone at all can get a computer to properly understand them, it won’t seem like we are using software at all.

What AI products do you think best understand your intent? Reply where you found this post with two sentences: what you were trying, and where the AI nailed it… or what it got horribly wrong.